You are here: Foswiki>Main Web>Computing>AdministrativeSinglePrimaryCluster (11 Aug 2012, WilliamSeligman)Edit Attach

Nevis particle-physics administrative cluster

Background

A single system

In the 1990s, Nevis computing centered on a single computer,nevis1. The majority of the users used this machine to analyze data, access their e-mail, set up web sites, etc. Although the system (an SGI Challenge XL) was relatively powerful for its time, this organization had some disadvantages:

- All the users had to share the processing queues. It was possible that one user could dominate the computer, preventing anyone else from running their own analysis jobs.

- If it became necessary to restart the computer, it had to be scheduled in advance (typically two weeks), since such a restart would affect almost everyone at Nevis.

- If the system's security became compromised, it affected everyone and everything on the system.

A distributed cluster

In the 2000's,nevis1 was gradually replaced by many Linux boxes. Administrative services were moved to separate systems, typically one service per box; e.g., there was a mail server, a DNS server, a Samba server, etc. Each working group at Nevis purchased their own server and managed their own disk space. The above issues were resolved:

- Jobs could be sent to the condor batch system, so that no one system would be slowed down due to a user's jobs.

- A single computer system could be restarted without affecting most of the rest of the cluster; e.g., if the mail server needed to be rebooted, it didn't affect the physics analysis.

- If a server became compromised, e.g., the web server, the effects could be restricted to that server.

- Each working group would purchase and maintain their server with their own funds. However, the administrative servers had to be purchased with Nevis' infrastructure funds. That meant that the administrative servers would be replaced rarely, if at all.

- As a result, the administrative servers tended to be older, recycled, or inexpensive systems, with a correspondingly higher risk of failure.

- At one point there were seven administrative servers in our computer area, each one requiring an uninterruptible power supply, and each one contributing to the power and heat load of the computer room.

High-availability

Tools

Over the last few years, there has been substantial work done in the open-source community towards high-availability servers. To put it simply, a service can be offered by a machine on a high-availability (HA) cluster. If that machine fails, the service automatically transfers to another machine on the HA cluster. The software packages used to implement HA on our cluster are Corosync with Pacemaker. Another open-source development was virtual machines for Linux. If you've ever used VMware, you're already familiar with the concept. The software (actually, a kernel extension) used to implement virtual machines in Linux is called Xen. The final "piece of the puzzle" is a software package plus kernel extension called DRBD. The simplest way to understand DRBD is to think of it as RAID1 between two computers: when one computer makes a change to a disk partition, the other computer makes the identical change to its copy of the partition. In this way, the disk partition is mirrored between the two systems. These tools can be used to solve the issues listed above:- Six different computers, all old, could be replaced by two new systems.

- The systems could be new, but relatively inexpensive. If one of them failed, the other one would automatically take up the services that the first computer offered.

- The disk images of the two computers, including the virtual machines, could be kept automatically synchronized (as opposed to being copied via a script run at regular intervals).

- Virtual machines are essentially large (~10GB) disk files. They can be manipulated as if they were separate computers (e.g., rebooted when needed) but can also be copied like disk files:

- If a virtual server had its security broken, it could be quickly replaced by a older, un-hacked copy of that virtual machine.

- If a virtual server required a complicated, time-consuming upgrade, a copy could be upgraded instead, then quickly swapped with minimal interruption.

Configuration

The non-HA server

First, let's go over an administrative server that is not part of the HA cluster:hermes. This server provides the following functions:

These services are not part of the HA cluster because: - in the hopefully unlikely event that the HA cluster needs to be rebooted, it's nice to have the services on

hermesstill available during the reboot; - it takes a bit of time for the HA cluster to start up after, e.g., a power outage; again, it's nice to have the above services immediately available.

The HA cluster

The two high-availability servers arehypatia and orestes. For the sake of simplicity, hypatia is normally the "main" server and orestes the backup server.

Disk configuration

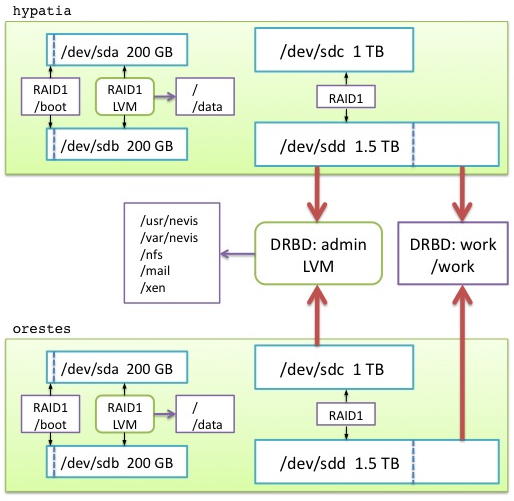

A sketch of the disk organization of the high-availability servers:

Text description:

- Each system has two 200GB drives, grouped together in a RAID1. These drives contain the operating system and any scratch disk space.

- Each system has one 1 TB drive, and one 1.5 TB drive.

- Main resource partition (admin):

- 1 TB of disk space from each of these drives is grouped in a RAID1.

- These two RAID1 areas are in turn grouped between the systems via DRBD into a single mirrored partition,

/dev/drbd1. - Using LVM, this partition is carved into directories which are exported to other machines, both real and virtual:

-

/usr/nevisis the application library partition exported to every other system on the Linux cluster. -

/var/neviscontains several resource directories; e.g.,/var/nevis/wwwcontains the Apache web server files;/var/nevis/dhcpdcontains the DHCP work files. -

/var/lib/nfscontains the files that keep track of the NFS state associated with exporting these directories to other systems. -

/mailcontains the work files used by the mail server, including user's INBOXes and IMAP folders. -

/xencontains the disk images of the Xen virtual machines.

-

- Scratch resource partition (work):

- The excess 0.5 TB is grouped between the systems into a single mirrored partition,

/work. - This area is used for developing new virtual machines, and for making "snapshots" of existing virtual machines.

- The excess 0.5 TB is grouped between the systems into a single mirrored partition,

- Main resource partition (admin):

Network configuration

Bothhypatia and orestes have two Ethernet ports: - Port

eth0is used for all external network traffic; VLANs are used in order for this port to accept traffic from any of the Nevis networks. - Port

eth1is used for the internal HA cluster traffic; the two systems are connected directly two each other using a single Ethernet cable. Both DRBD and Corosync have been configured to use this port exclusively.

hypatia and orestes, but this would not be useful if the HA services were moved from one system to the other. Among the HA resources (see below) that are managed by the systems are "generic" IP addresses assigned to the cluster. The IP name hamilton.nevis.columbia.edu always points to the system that offering the important cluster resources; the name burr.nevis.columbia.edu always points to the system offering "scratch" resources. Of course, if one of these systems goes down, then these two aliases will point to the same box.

In general, this means that if you need to access the system offering the main cluster resources, always use the name hamilton.

Resource configuration

In HA terms, a "resource" means "anything you want to keep available all the time." What follows is an outline of the resources configured for our HA cluster. In this outline, an indent means that the resource depends on one above it; for example, the mail-server virtual machine won't start if NFS is not available; NFS won't start if/var/lib/nfs is not available.

Most of the resources are controlled by scripts provided as part of the Pacemaker/Corosync package. The resources that begin with lsb:: (Linux standard base) are controlled by the standard scripts found in /etc/init.d/ on most Linux systems.

The entire configuration is spelled out in (excruciating?) detail on a separate corosync configuration page.

Services controlled by corosync:

main node:

Admin:Master = the DRBD "admin" partition's main image

(Constraint: +100 to be on hypatia)

MainIPGroup:

IP = 129.236.252.11 (hamilton = library = time = print)

IP = 10.44.7.11

IP = 10.43.7.11

LVM = makes the following logical volumes on the admin partition visible

Filesystem: /usr/nevis

Filesystem: /mail

Filesystem: /var/nevis

Filesystem: /var/lib/nfs

lsb::cups

lsb::xinetd (includes tftp and ftp)

lsb::dhcp (ln -sf /var/nevis/dhcpd /var/lib/dhcpd)

lsb::nfs

Xen virtual machines:

sullivan (mailing list)

tango (Samba)

ada (= www; web server)

franklin (= mail; mail server)

hogwarts (= staff accounts for non-login users)

Work:Master = the DRBD "work" partition's main image

Filesystem: /work

assistant node:

AssistantIPGroup

(Constraint: -1000 to be on same system as hamilton)

IP = 129.236.252.10 (burr = assistant)

IP = 10.44.7.10

mount library:/usr/nevis

lsb::condor

(Constraint: -INF for AdminDirectoriesGroup; if everything is running on one box, stop running condor)

On both systems: the STONITH resources.

References

These are the web sites I used to develop the HA cluster configuration at Nevis.Corosync/Pacemaker

http://www.clusterlabs.org/wiki/Main_Pagehttp://www.clusterlabs.org/rpm/

http://theclusterguy.clusterlabs.org/post/178680309/configuring-heartbeat-v1-was-so-simple

http://www.clusterlabs.org/doc/en-US/Pacemaker/1.0/html/Pacemaker_Explained/

http://www.ourobengr.com/ha

DRBD

http://www.drbd.org/home/feature-list/http://www.clusterlabs.org/wiki/DRBD_HowTo_1.0

http://howtoforge.com/highly-available-nfs-server-using-drbd-and-heartbeat-on-debian-5.0-lenny

Xen virtual machines

http://virt-manager.et.redhat.com/download.htmlhttp://wiki.xensource.com/xenwiki/XenNetworking

http://toic.org/2008/10/06/multiple-network-interfaces-in-xen/

http://toic.org/2008/09/22/preventing-ip-conflicts-in-xen/

Edit | Attach | Print version | History: r10 < r9 < r8 < r7 | Backlinks | View wiki text | Edit wiki text | More topic actions

Topic revision: r9 - 11 Aug 2012, WilliamSeligman

Copyright © by the contributing authors. All material on this collaboration platform is the property of the contributing authors.

Copyright © by the contributing authors. All material on this collaboration platform is the property of the contributing authors. Ideas, requests, problems regarding Foswiki? Send feedback